Test View

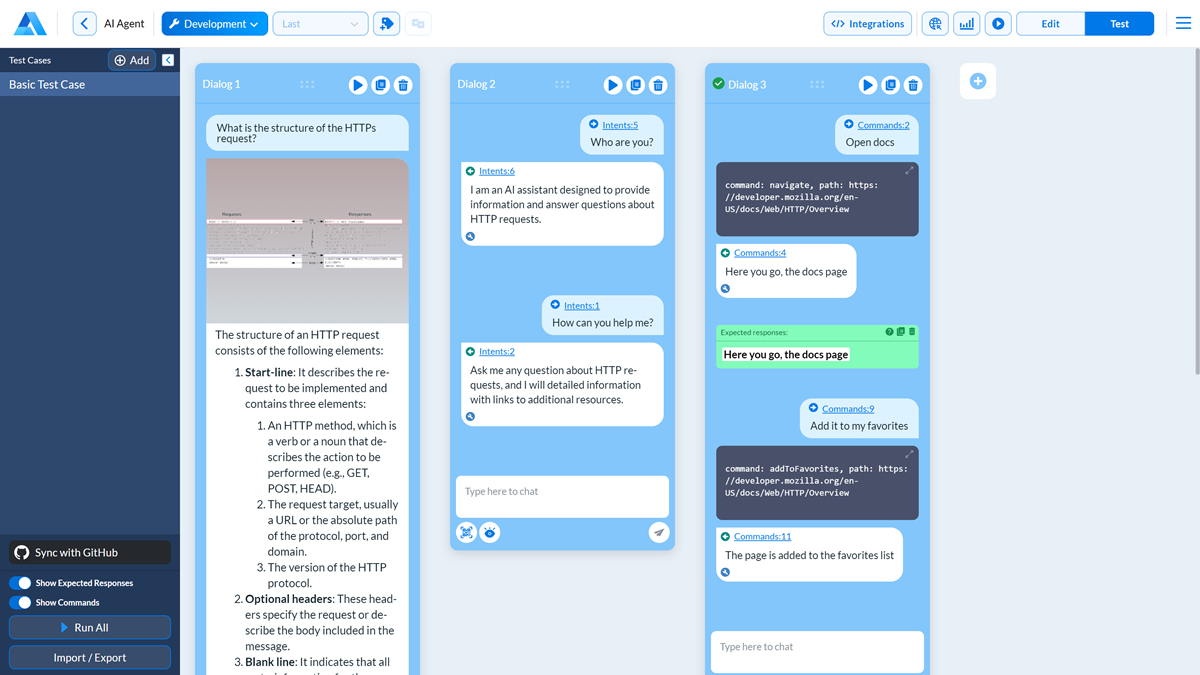

To automate dialog testing, use the Test View in Alan AI Studio. The Test View lets you create test cases and run them repeatedly against new versions of the dialog script. With test cases, you can quickly validate your dialog at any stage of development and make sure it meets all requirements for user queries and the dialog flow.

Note

The Test View lets you validate queries handled both by data corpora and by intents.

In the Test View, you can:

Create and run test cases

Export and import test cases

Save test cases to GitHub

Creating and running test cases

To create a test case:

In the top right corner of the code editor, click Test.

At the top of the left pane, click Add, specify the case name and click OK.

In the dialog box, create a dialog branch you want to test:

In the Dialog pane, enter user queries that you want to test within one dialog branch.

To set the visual state for the dialog branch or a specific command, click the Set visual state icon and enter the visual state as JSON. For details, see Setting the visual state.

(For intent-driven queries) To validate Alan’s responses, enable the Show Expected Responses option in the left pane of the code editor. Then hover over a user command in the branch and click the Add expected response icon. In the box below, enter one or more phrases that the Agentic Interface must reply with.

Note

To specify compound responses, use AND and OR. If a phrase contains an apostrophe, enclose it in double quotes, for example: hello OR (hi AND "what's up").

To add another dialog branch, click the plus icon to the right of the dialog box and create the branch as described above.
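The AND/OR syntax for compound responses can be read as a small boolean expression over phrases. The sketch below is illustrative only and is not how Alan AI Studio evaluates expected responses: it assumes that a phrase matches when it appears anywhere in the reply (case-insensitive), that AND binds tighter than OR, and that consecutive bare words form one phrase. The names `tokenize`, `parse`, `evaluate`, and `matches_expected` are hypothetical.

```python
import re

# One token: "(", ")", a double-quoted phrase, or a bare word.
# Bare words may not contain apostrophes, mirroring the rule that
# phrases with apostrophes must be enclosed in double quotes.
TOKEN_RE = re.compile(r'\s*(?:(\()|(\))|"([^"]*)"|([^\s()"\']+))')


def tokenize(expr: str) -> list[tuple[str, str]]:
    tokens = []
    text = expr.strip()
    pos = 0
    while pos < len(text):
        m = TOKEN_RE.match(text, pos)
        if m is None:
            raise ValueError(f"Cannot parse expected response at: {text[pos:]!r}")
        lparen, rparen, quoted, word = m.groups()
        if lparen:
            tokens.append(("LPAREN", lparen))
        elif rparen:
            tokens.append(("RPAREN", rparen))
        elif quoted is not None:
            tokens.append(("PHRASE", quoted))
        elif word in ("AND", "OR"):
            tokens.append((word, word))
        else:
            tokens.append(("WORD", word))
        pos = m.end()
    return tokens


class _Parser:
    """Recursive-descent parser: OR of ANDs of atoms."""

    def __init__(self, tokens: list[tuple[str, str]]):
        self.tokens = tokens
        self.i = 0

    def peek(self):
        return self.tokens[self.i][0] if self.i < len(self.tokens) else None

    def parse_or(self) -> tuple:
        node = self.parse_and()
        while self.peek() == "OR":
            self.i += 1
            node = ("OR", node, self.parse_and())
        return node

    def parse_and(self) -> tuple:
        node = self.parse_atom()
        while self.peek() == "AND":
            self.i += 1
            node = ("AND", node, self.parse_atom())
        return node

    def parse_atom(self) -> tuple:
        kind = self.peek()
        if kind == "LPAREN":
            self.i += 1
            node = self.parse_or()
            if self.peek() != "RPAREN":
                raise ValueError("Missing closing parenthesis")
            self.i += 1
            return node
        if kind == "PHRASE":
            self.i += 1
            return ("PHRASE", self.tokens[self.i - 1][1])
        if kind == "WORD":
            words = []  # consecutive bare words form one phrase
            while self.peek() == "WORD":
                words.append(self.tokens[self.i][1])
                self.i += 1
            return ("PHRASE", " ".join(words))
        raise ValueError(f"Unexpected token: {kind}")


def parse(expr: str) -> tuple:
    parser = _Parser(tokenize(expr))
    tree = parser.parse_or()
    if parser.peek() is not None:
        raise ValueError("Unexpected trailing tokens")
    return tree


def evaluate(node: tuple, reply: str) -> bool:
    if node[0] == "PHRASE":
        # Assumption: a phrase matches if it occurs in the reply, ignoring case
        return node[1].lower() in reply.lower()
    left = evaluate(node[1], reply)
    right = evaluate(node[2], reply)
    return (left and right) if node[0] == "AND" else (left or right)


def matches_expected(expr: str, reply: str) -> bool:
    return evaluate(parse(expr), reply)


expected = 'hello OR (hi AND "what\'s up")'
print(matches_expected(expected, "Hi, what's up?"))  # True
print(matches_expected(expected, "Hi there"))        # False
```

Under these assumptions, "Hi there" fails because the right-hand AND needs both "hi" and "what's up", and the reply contains neither "hello" nor "what's up".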

After your test case is set up, click Run all in the bottom left corner of the code editor. Alan AI runs the test case against the current version of the dialog script and marks each dialog branch as passed or failed.
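Conceptually, Run all replays each branch and marks it passed only if every expected response matches. The sketch below models that idea under stated assumptions and is not Alan AI's implementation: the data model (`Step`, `Branch`, `DialogTestCase`) is hypothetical, `toy_respond` stands in for a dialog script, a per-command visual state is assumed to apply to that command only, and when several phrases are listed, any one of them is assumed to be acceptable.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    query: str                          # user query entered in the Dialog pane
    visual_state: Optional[str] = None  # JSON for this command only (assumption)
    expected: list[str] = field(default_factory=list)  # acceptable phrases


@dataclass
class Branch:
    steps: list[Step]
    visual_state: Optional[str] = None  # JSON applied to the whole branch


@dataclass
class DialogTestCase:
    name: str
    branches: list[Branch]


# Hypothetical stand-in for the dialog script: (query, visual state) -> reply
Responder = Callable[[str, dict], str]


def run_branch(branch: Branch, respond: Responder) -> bool:
    branch_state = json.loads(branch.visual_state) if branch.visual_state else {}
    for step in branch.steps:
        state = json.loads(step.visual_state) if step.visual_state else branch_state
        reply = respond(step.query, state).lower()
        # Assumption: any one of the listed phrases is an acceptable reply
        if step.expected and not any(p.lower() in reply for p in step.expected):
            return False
    return True


def run_all(test_case: DialogTestCase, respond: Responder) -> list[str]:
    return ["passed" if run_branch(b, respond) else "failed" for b in test_case.branches]


def toy_respond(query: str, visual_state: dict) -> str:
    q = query.lower()
    if "hello" in q:
        return "Hi there!"
    if "cart" in q:
        return f"You have {visual_state.get('itemsCount', 0)} items."
    return "Sorry, I did not get that."


case = DialogTestCase(
    name="smoke",
    branches=[
        Branch(steps=[Step("hello", expected=["hi"])]),
        Branch(steps=[Step("what's in my cart", expected=["3 items"])],
               visual_state='{"itemsCount": 3}'),
        Branch(steps=[Step("book a flight", expected=["flight booked"])]),
    ],
)
print(run_all(case, toy_respond))  # ['passed', 'passed', 'failed']
```

The second branch shows why the visual state matters: the same query produces a different reply depending on the JSON state supplied with the branch.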

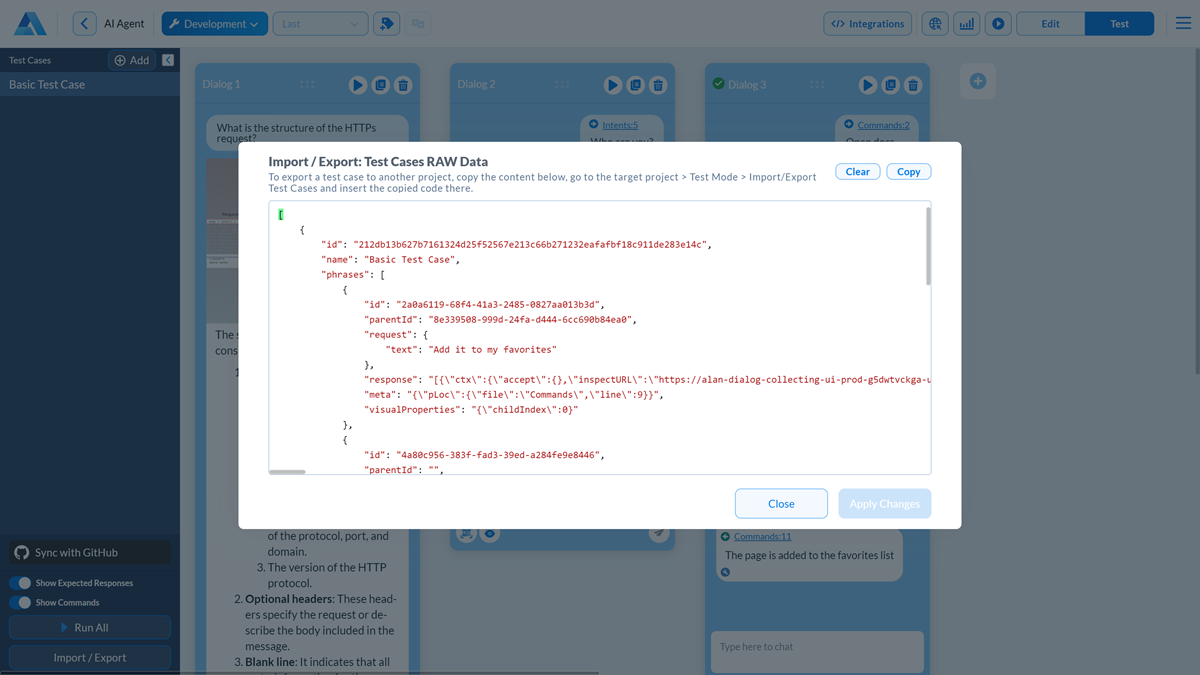

Exporting and importing test cases

To save a test case for later or transfer it to another project on your account, use the Import/Export option in Alan AI Studio.

In the left pane of the Test View, click Import/Export.

In the top right corner, click Copy to copy the raw test case data and save it in a file.

To import a test case to an Alan AI Studio project, in the Test View, click Import/Export, paste the copied data and click Apply Changes.

Note

If a test case with the same name already exists in your project, Alan AI will replace the existing test case with the imported one.
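The replace-on-import rule amounts to merging test cases by name. The sketch below assumes a hypothetical representation of the copied raw data as a JSON list of objects with a `name` key; the format Alan AI Studio actually produces may differ. The helpers `save_export`, `load_export`, and `import_test_cases` are hypothetical and only illustrate saving the copied data to a file and the merge behavior.

```python
import json
import tempfile
from pathlib import Path


def save_export(raw_data: str, path: Path) -> None:
    # Store the text copied from Import/Export so it can be pasted back later
    path.write_text(raw_data, encoding="utf-8")


def load_export(path: Path) -> str:
    return path.read_text(encoding="utf-8")


def import_test_cases(existing: list[dict], raw_data: str) -> list[dict]:
    """Merge imported test cases into a project's test cases.

    Assumption: raw_data is a JSON list of objects, each with a "name" key.
    An imported test case replaces an existing one with the same name;
    test cases with new names are appended.
    """
    by_name = {case["name"]: case for case in existing}  # dicts keep insertion order
    for case in json.loads(raw_data):
        by_name[case["name"]] = case  # same name: the imported version wins
    return list(by_name.values())


existing = [
    {"name": "greetings", "queries": ["hello"]},
    {"name": "orders", "queries": ["order a pizza"]},
]
raw = json.dumps([
    {"name": "orders", "queries": ["order a pizza", "add a drink"]},
    {"name": "payments", "queries": ["pay by card"]},
])

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "test-cases.json"
    save_export(raw, path)
    merged = import_test_cases(existing, load_export(path))

print([case["name"] for case in merged])  # ['greetings', 'orders', 'payments']
```

Because the imported "orders" case overwrites the existing one, any changes made to the project's copy since the export are lost, which is worth keeping in mind before importing.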

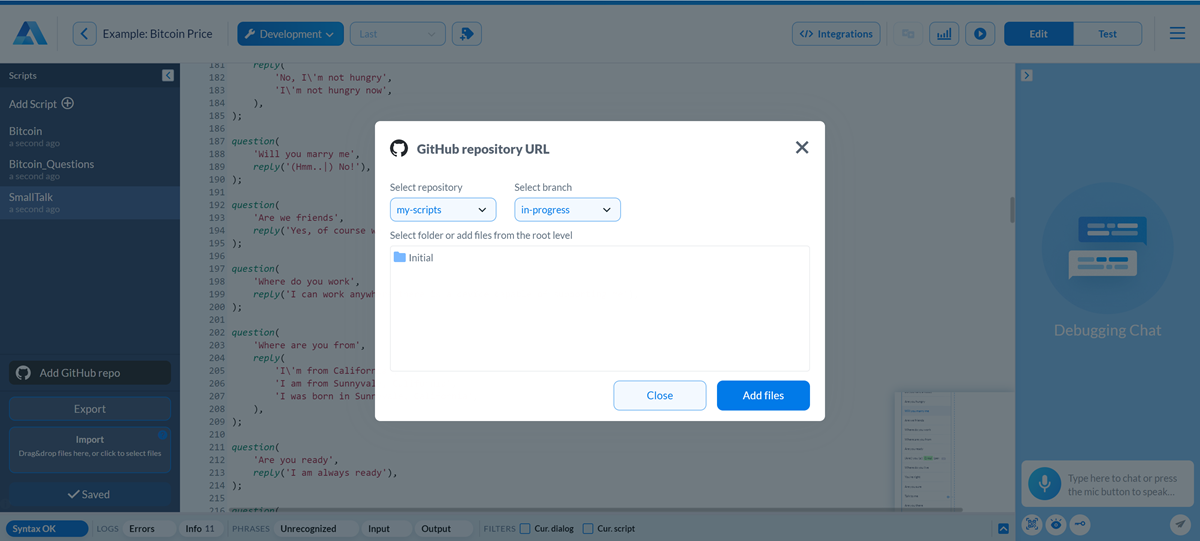

Saving test cases to GitHub

You can integrate an Agentic Interface project with GitHub and store the created test cases in a GitHub repository, just like dialog script files. This option can be helpful if you want to share test cases with other team members working on the Agentic Interface. For details, see Sharing and keeping scripts in GitHub.

Dialog scripts and test cases are saved to the same repository and branch. After you synchronize with GitHub, Alan AI pulls the content from the selected repository and branch and adds it to your project:

Dialog script files (JS) are added to the code editor

Test case files (TESTCASE) are added to the Test View
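The split of pulled files between the code editor and the Test View can be pictured as routing by file extension. This is a sketch under assumptions: `route_synced_files` and its return structure are hypothetical, extensions are matched case-insensitively, and test case files are assumed to use the `.testcase` extension. How Alan AI treats other files is not covered by this page, so they are simply collected separately.

```python
from pathlib import PurePosixPath


def route_synced_files(paths: list[str]) -> dict[str, list[str]]:
    """Sort files pulled from GitHub by where they appear in Alan AI Studio.

    Hypothetical helper: .js files go to the code editor, .testcase files
    go to the Test View, and anything else is collected under "other".
    """
    routes: dict[str, list[str]] = {"code_editor": [], "test_view": [], "other": []}
    for path in paths:
        suffix = PurePosixPath(path).suffix.lower()
        if suffix == ".js":
            routes["code_editor"].append(path)  # dialog script files
        elif suffix == ".testcase":
            routes["test_view"].append(path)    # test case files
        else:
            routes["other"].append(path)        # not covered by this page
    return routes


print(route_synced_files(["main.js", "orders/checkout.js", "greetings.testcase", "README.md"]))
```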

When you push changes to GitHub, Alan AI pushes the changes made to both dialog scripts and test cases. If there are conflicts, Alan AI prompts you to resolve them one at a time, separately for dialog scripts and for test cases.