Building a voice Agentic Interface for an Android Java or Kotlin app

With the Alan AI SDK for Android, you can create a voice Agentic Interface and embed it in your Android app written in Java or Kotlin. The Alan AI Platform provides you with all the tools and leverages the industry’s best Automatic Speech Recognition (ASR), Spoken Language Understanding (SLU) and Speech Synthesis technologies to quickly build an Agentic Interface from scratch.

In this tutorial, we will build a simple Android app, add a voice Agentic Interface to it and test it. The users will be able to tap the voice Agentic Interface button in the app and give custom voice commands, and the Agentic Interface will reply to them.

YouTube

If you are a visual learner, watch this tutorial on the Alan AI YouTube channel.

What you will learn

How to add a voice Agentic Interface to an Android app written in Java or Kotlin

How to write custom voice commands for an Android app

What you will need

To go through this tutorial, make sure the following prerequisites are met:

You have signed up to Alan AI Studio.

You have created a project in Alan AI Studio.

You have set up the Android environment and it is functioning properly. For details, see the Android developers documentation.

(If running the app on an emulator) All virtual microphone options must be enabled. On the emulator settings bar, click More (…) > Microphone and make sure all toggles are set to the On position.

(If running the app on a device) The device must be connected to the Internet. The Internet connection is required to let the Android app communicate with the dialog script running in the Alan AI Cloud.

Step 1: Create a starter Android app

For this tutorial series, we will be using a simple Android app with a tabbed layout. Let’s create it.

Open the IDE and start a new Android project.

Select Tabbed Activity as the project template. Then click Next.

Enter a project name, for example, MyApp, and select the language: Java or Kotlin.

The Alan AI SDK requires Android API level 21 or higher. In the Minimum SDK list, select API 21. Then click Finish.

Step 2: Integrate the app with Alan AI

Now we will add the Alan AI agentic interface to the app.

Open the build.gradle file at the module level.

In the dependencies block, add the dependency configuration for the Alan AI SDK for Android. Do not forget to sync the project.

build.gradle

    dependencies {
        /// Adding Alan SDK dependency
        implementation 'app.alan:sdk:4.12.0'
    }

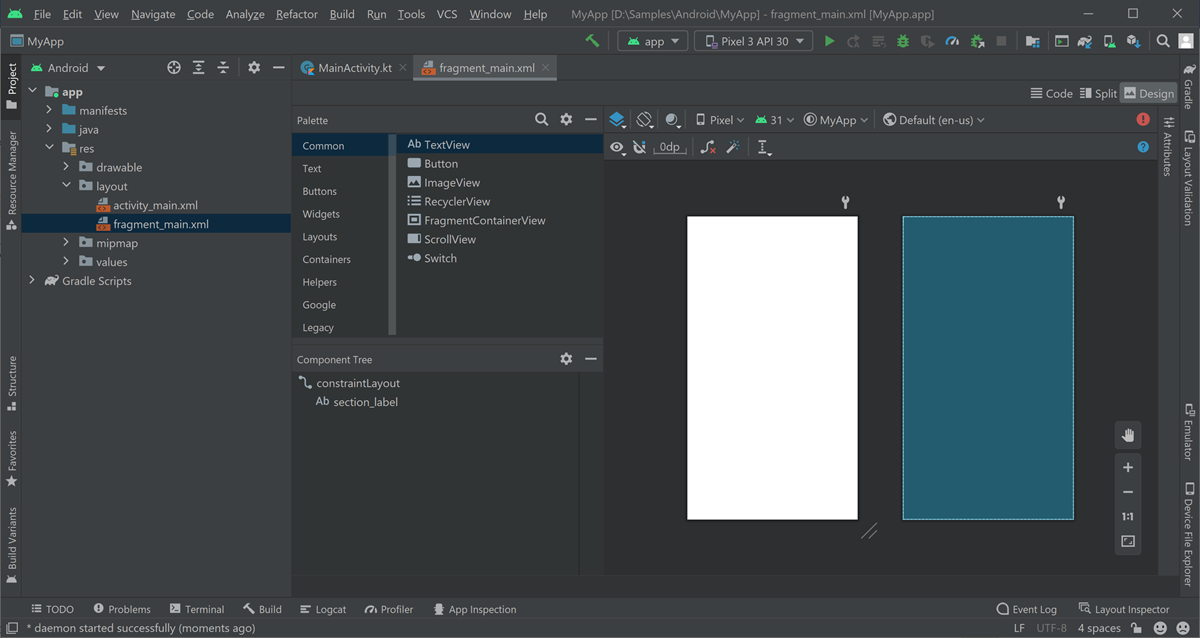

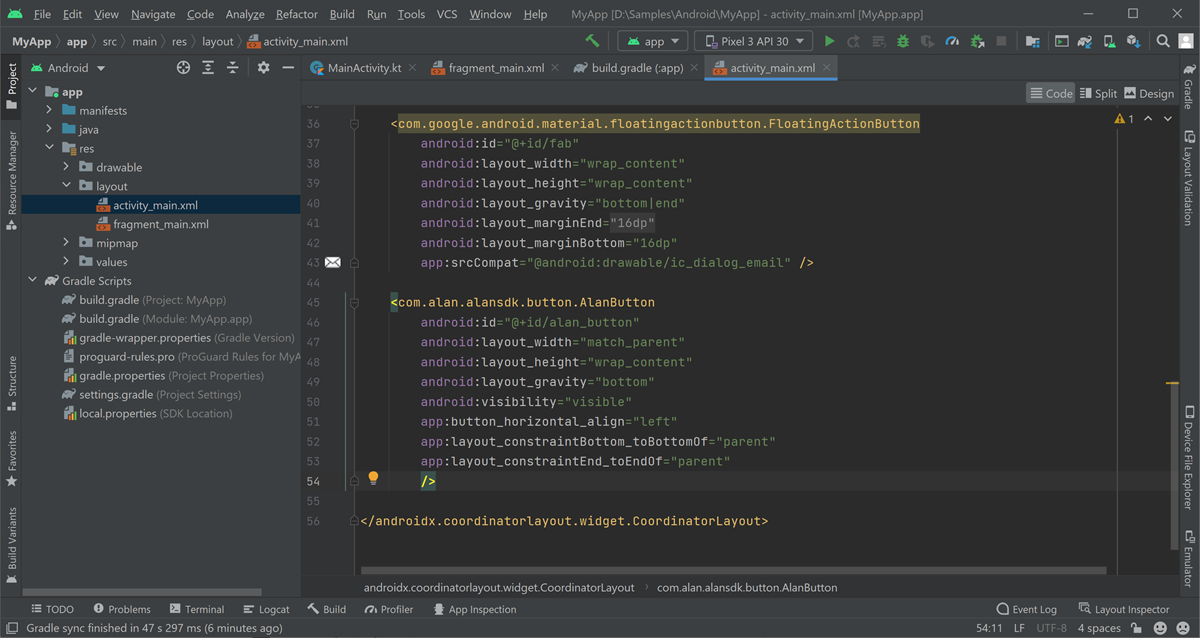

Next, we need to add the XML layout for the Alan AI agentic interface to the main app activity. Open the main_activity.xml file, switch to the Code view and add the following layout to it:

main_activity.xml

    <com.alan.alansdk.button.AlanButton
        android:id="@+id/alan_button"
        android:layout_width="match_parent"
        android:layout_height="wrap_content"
        android:layout_gravity="bottom"
        android:visibility="visible"
        app:button_horizontal_align="right"
        app:layout_constraintBottom_toBottomOf="parent"
        app:layout_constraintEnd_toEndOf="parent"/>

By default, the Alan AI agentic interface is placed in the bottom right corner of the screen. Use the

button_horizontal_alignproperty to change the button position toleftfor the button not to overlap with the floating button already available in the starter app.Here is what your

main_activity.xmlfile should look like:

Add the

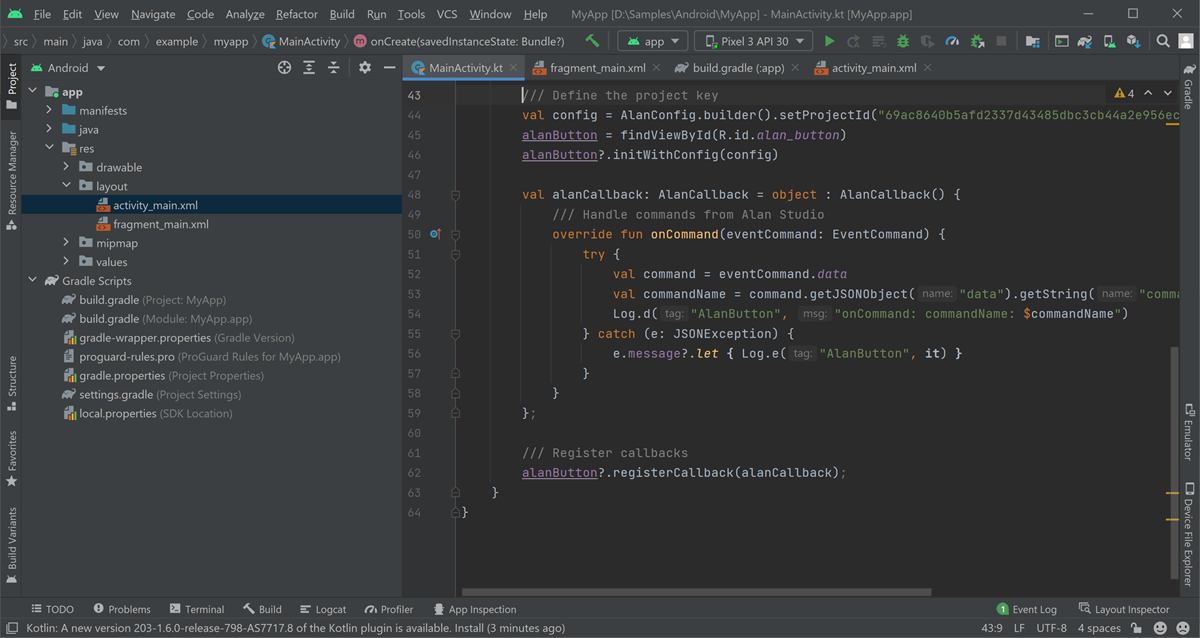

alanConfigobject to the app. This object describes the parameters that are provided for the Alan AI agentic interface. To do this, open theMainActivity.javaorMainActivity.ktfile and add the following code to theMainActivityclass:MainActivity.java¶public class MainActivity extends AppCompatActivity { /// Adding AlanButton variable private AlanButton alanButton; @Override protected void onCreate(Bundle savedInstanceState) { /// Defining the project key AlanConfig config = AlanConfig.builder().setProjectId("").build(); alanButton = findViewById(R.id.alan_button); alanButton.initWithConfig(config); AlanCallback alanCallback = new AlanCallback() { /// Handling commands from Alan AI Studio @Override public void onCommand(final EventCommand eventCommand) { try { JSONObject command = eventCommand.getData(); String commandName = command.getJSONObject("data").getString("command"); Log.d("AlanButton", "onCommand: commandName: " + commandName); } catch (JSONException e) { Log.e("AlanButton", e.getMessage()); } } }; /// Registering callbacks alanButton.registerCallback(alanCallback); } }

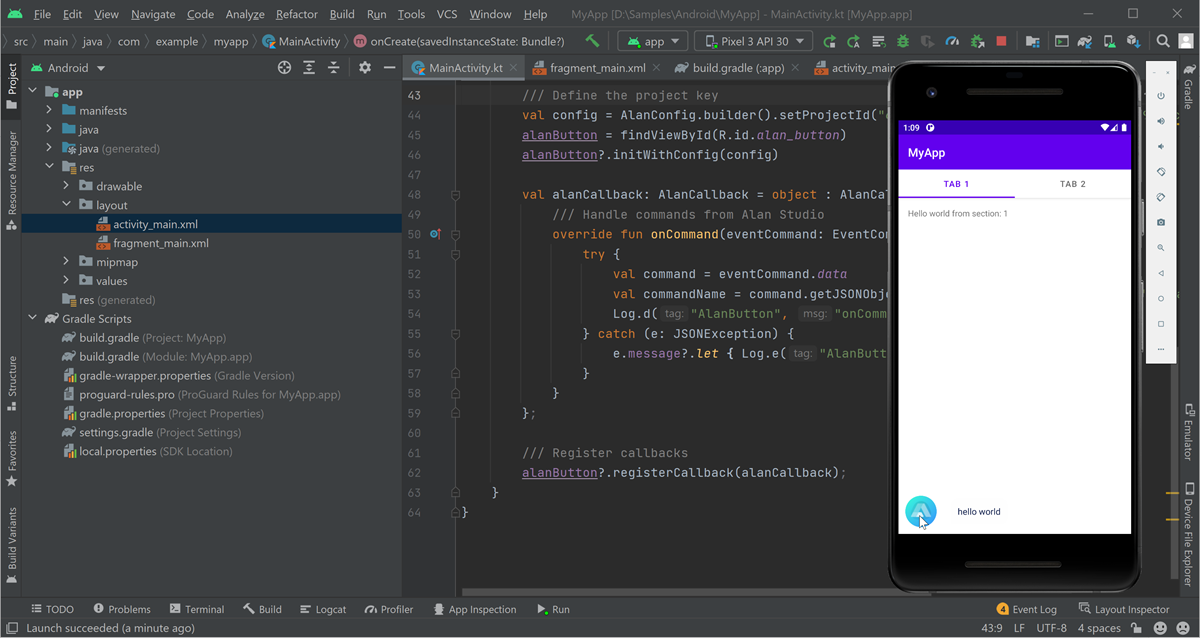

MainActivity.kt

    class MainActivity : AppCompatActivity() {

        /// Adding AlanButton variable
        private var alanButton: AlanButton? = null

        override fun onCreate(savedInstanceState: Bundle?) {
            super.onCreate(savedInstanceState)
            // Keep the template's existing setup (setContentView, tabs) here:
            // the layout must be set before findViewById is called.

            /// Defining the project key
            val config = AlanConfig.builder().setProjectId("").build()
            alanButton = findViewById(R.id.alan_button)
            alanButton?.initWithConfig(config)

            val alanCallback: AlanCallback = object : AlanCallback() {
                /// Handling commands from Alan AI Studio
                override fun onCommand(eventCommand: EventCommand) {
                    try {
                        val command = eventCommand.data
                        val commandName = command.getJSONObject("data").getString("command")
                        Log.d("AlanButton", "onCommand: commandName: $commandName")
                    } catch (e: JSONException) {
                        e.message?.let { Log.e("AlanButton", it) }
                    }
                }
            }

            /// Registering callbacks
            alanButton?.registerCallback(alanCallback)
        }
    }

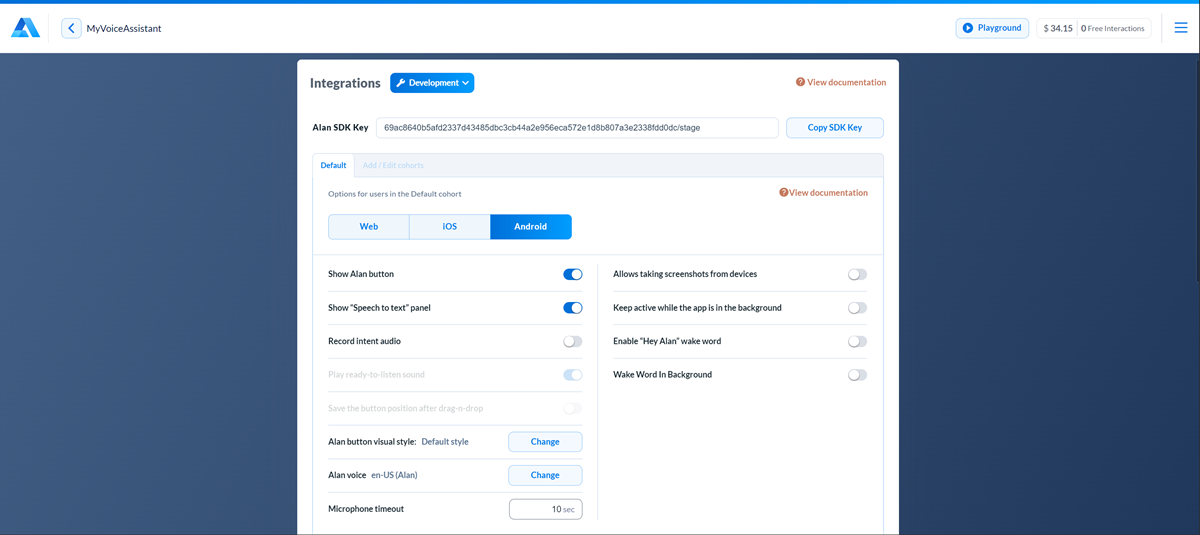

In setProjectId, we need to provide the Alan AI SDK key for our virtual Agentic Interface project. To get the key, open Alan AI Studio, click Integrations at the top of the code editor and copy the value from the Alan SDK Key field.

In the MainActivity.java or MainActivity.kt file, import the necessary classes. Here is what your main activity file should look like:

Run the app.

After the app is built, tap the Alan AI agentic interface in the app and say: Hello world.

However, if you ask: How are you doing?, the Agentic Interface will not give an appropriate response. This is because the dialog script in Alan AI Studio does not contain the necessary voice commands yet.
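The onCommand callback registered in this step only logs the command name. As a minimal sketch of what an app might do with that name, the plain-Java class below maps command names to handlers. CommandRouter, its on and dispatch methods, and any command names you register are hypothetical and are not part of the Alan AI SDK.

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Hypothetical helper (not part of the Alan AI SDK): maps command names
 * to handlers so that onCommand() can stay a single call to dispatch().
 */
final class CommandRouter {

    private final Map<String, Runnable> handlers = new HashMap<>();

    /** Registers the action to run when the given command name arrives. */
    CommandRouter on(String commandName, Runnable action) {
        handlers.put(commandName, action);
        return this; // allows chained registration
    }

    /** Runs the matching handler; returns false if the name is unknown. */
    boolean dispatch(String commandName) {
        Runnable action = handlers.get(commandName);
        if (action == null) {
            return false;
        }
        action.run();
        return true;
    }
}
```

In the callback above, a call such as router.dispatch(commandName) could replace the Log.d line, with a false result logged as an unhandled command.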

Step 3: Add voice commands

Let’s add some voice commands so that we can interact with Alan AI. In Alan AI Studio, open the project and in the code editor, add the following intents:

    intent(`What is your name?`, p => {
        p.play(`It's Alan, and yours?`);
    });

    intent(`How are you doing?`, p => {
        p.play(`Good, thank you. What about you?`);
    });

Now tap the Alan AI agentic interface and ask: What is your name? and How are you doing? The Agentic Interface will give the responses we provided in the added intents.

What’s next?

You can now go on with building a voice Agentic Interface with Alan AI. Here are some helpful resources:

Go to Dialog script concepts to learn about Alan AI’s concepts and functionality you can use to create a dialog script.

In Alan AI Git, get the Android Java example app or the Android Kotlin example app. Use this example app to see how integration for Android apps can be implemented.

Have a look at the next tutorial: Navigating in an Android app with voice.