Static corpus

The Q&A service lets you create a Q&A Agentic Interface that uses static data sources: company website pages, product manuals, guidelines, FAQ pages, articles and so on.

You can define the following types of static corpuses in the dialog script:

Web corpus: retrieve information from website pages and PDF files available online

Text corpus: use plain text as an information source

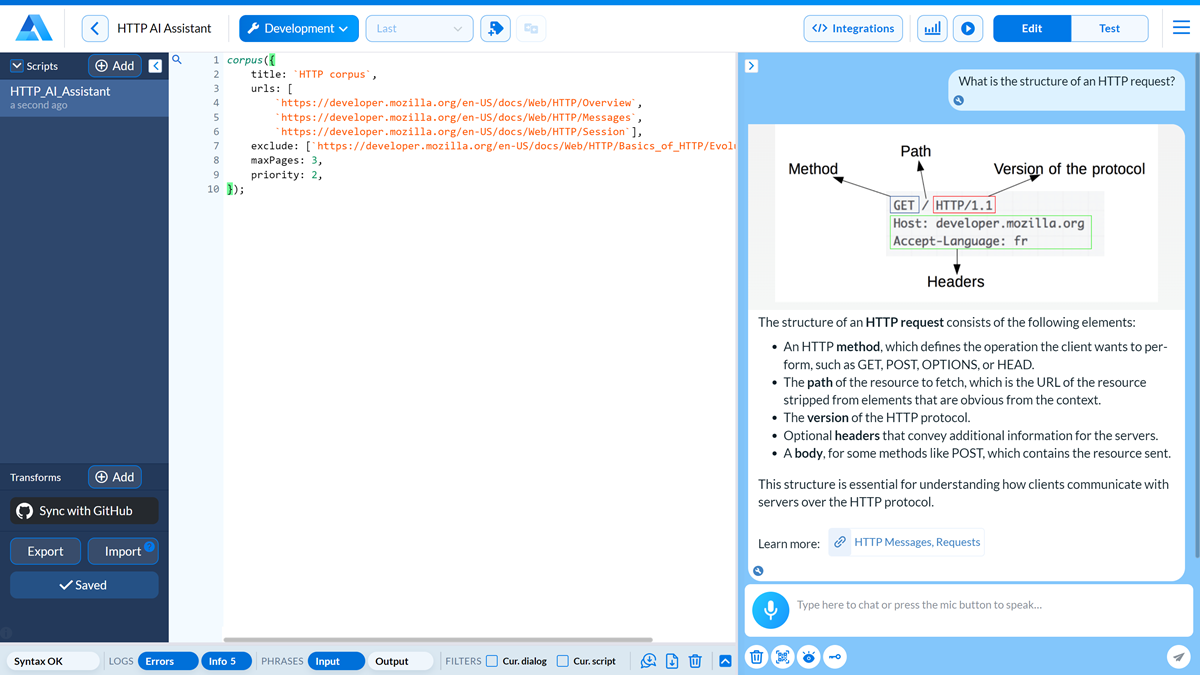

Web corpus

To define a web corpus for your Q&A Agentic Interface, use the corpus() function.

    corpus({
        title: `HTTP corpus`,
        urls: [
            `https://developer.mozilla.org/en-US/docs/Web/HTTP/Overview`,
            `https://developer.mozilla.org/en-US/docs/Web/HTTP/Messages`,
            `https://developer.mozilla.org/en-US/docs/Web/HTTP/Session`,
        ],
        auth: {username: 'johnsmith', password: 'password'},
        include: [/.*\.pdf/],
        exclude: [`https://developer.mozilla.org/en-US/docs/Web/HTTP/Basics_of_HTTP/Evolution_of_HTTP`],
        query: transforms.queries,
        transforms: transforms.answers,
        depth: 1,
        maxPages: 5,
        priority: 0,
    });
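
Before indexing, it can be useful to check locally which URLs a set of `include` or `exclude` rules would match. The following is a minimal sketch, not the Q&A service's own matcher: `matchesAnyRule` is a hypothetical helper, the candidate URLs are made up for illustration, and treating string rules as exact matches is an assumption (the service's actual rules are described in Corpus includes and excludes).

```javascript
// Hypothetical local check, not part of the Alan AI API.
// A rule is either a RegExp or a URL string.
function matchesAnyRule(url, rules) {
    return rules.some((rule) =>
        rule instanceof RegExp
            ? rule.test(url)   // RegEx rule: pattern match
            : rule === url     // assumption: string rules match the URL exactly
    );
}

const include = [/.*\.pdf/];
const exclude = [
    'https://developer.mozilla.org/en-US/docs/Web/HTTP/Basics_of_HTTP/Evolution_of_HTTP',
];

// Illustrative candidate URLs (the PDF URL is invented for this sketch).
const candidates = [
    'https://developer.mozilla.org/en-US/docs/Web/HTTP/Overview',
    'https://developer.mozilla.org/en-US/docs/Web/HTTP/Basics_of_HTTP/Evolution_of_HTTP',
    'https://example.com/manuals/http-guide.pdf',
];

for (const url of candidates) {
    console.log(url, {
        matchesInclude: matchesAnyRule(url, include),
        matchesExclude: matchesAnyRule(url, exclude),
    });
}
```

Note that `/.*\.pdf/` is unanchored, so it also matches URLs in which `.pdf` appears in the middle of the string. A pattern such as `/\.pdf$/` matches only URLs that end in `.pdf`.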

Corpus parameters

| Name | Type | Required/Optional | Description |
|---|---|---|---|
| `title` | string | Optional | Corpus title. |
| `urls` | string array | Required | List of URLs from which information must be retrieved. You can define URLs of website folders and pages. |
| `auth` | JSON object | Optional | Credentials to access resources that require basic authentication: `username` and `password`. |
| `include` | string array | Optional | Resources that must be indexed. You can define an array of URLs or use RegEx to specify a rule. For details, see Corpus includes and excludes. |
| `exclude` | string array | Optional | Resources to be excluded from indexing. You can define an array of URLs or use RegEx to specify a rule. For details, see Corpus includes and excludes. |
| `query` | function | Optional | Transforms function used to process user queries. For details, see Static corpus transforms. |
| `transforms` | function | Optional | Transforms function used to format the corpus output. For details, see Static corpus transforms. |
| `depth` | integer | Optional | Crawl depth for web and PDF resources. The minimum value is 0 (crawling only the page content without linked resources). For details, see Crawling depth. |
| `maxPages` | integer | Optional | Maximum number of pages and files to index. If not set, only 1 page with the defined URL will be indexed. |
| `priority` | integer | Optional | Priority level assigned to the corpus. Corpuses with higher priority are considered more relevant when user requests are processed. For details, see Corpus priority. |
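
The rules in the table can be checked on a configuration object before it is passed to `corpus()`. The sketch below uses a hypothetical helper, `validateWebCorpus`, which is not part of the Alan AI API. It encodes only the types and the required/optional status listed above; requiring `urls` to be non-empty is an assumption, and `auth`, `include` and `exclude` are left unchecked.

```javascript
// Hypothetical helper, not part of the Alan AI API: checks a web corpus
// config against the parameter table and returns a list of problems.
function validateWebCorpus(config) {
    const errors = [];
    const isStringArray = (v) =>
        Array.isArray(v) && v.every((x) => typeof x === 'string');

    // `urls` is the only required parameter (non-empty is an assumption).
    if (!isStringArray(config.urls) || config.urls.length === 0) {
        errors.push('urls: required, must be a non-empty array of strings');
    }
    if (config.title !== undefined && typeof config.title !== 'string') {
        errors.push('title: must be a string');
    }
    // `depth` is an integer with a minimum value of 0.
    if (config.depth !== undefined &&
        !(Number.isInteger(config.depth) && config.depth >= 0)) {
        errors.push('depth: must be an integer >= 0');
    }
    for (const name of ['maxPages', 'priority']) {
        if (config[name] !== undefined && !Number.isInteger(config[name])) {
            errors.push(`${name}: must be an integer`);
        }
    }
    for (const name of ['query', 'transforms']) {
        if (config[name] !== undefined && typeof config[name] !== 'function') {
            errors.push(`${name}: must be a function`);
        }
    }
    return errors;
}

// A config with a valid `urls` list but a negative crawl depth.
const problems = validateWebCorpus({
    urls: ['https://developer.mozilla.org/en-US/docs/Web/HTTP/Overview'],
    depth: -1,
});
console.log(problems); // [ 'depth: must be an integer >= 0' ]
```

An empty array means the object satisfies the checks above. It does not guarantee that the Q&A service can reach or index the listed URLs.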

Note

Mind the following:

Make sure the websites and pages you define in the corpus() function are not protected from crawling. The Q&A service cannot retrieve content from such resources.

The indexing process may take some time. To check the progress and results, use the Alan AI Studio logs.

The maximum number of indexed pages depends on your pricing plan. For details, contact the Alan AI Sales Team.

Text corpus

To define a text corpus for the Q&A Agentic Interface, add plain text strings or Markdown-formatted text to the corpus() function:

    corpus({
        title: `HTTP corpus`,
        text: `
    # Understanding **async/await** in JavaScript

    **async/await** is a feature in JavaScript that makes working with asynchronous code easier and more readable. It allows you to write asynchronous code that looks and behaves like synchronous code, making it easier to follow and understand.

    ## How Does **async/await** Work?

    ### **async** Keyword:

    - The **async** keyword is used to declare a function as asynchronous.
    - An **async** function returns a **Promise**, and it can contain **await** expressions that pause the execution of the function until the awaited **Promise** is resolved.

    ### **await** Keyword:

    - The **await** keyword can only be used inside an **async** function.
    - It pauses the execution of the function until the **Promise** passed to it is settled (either fulfilled or rejected).
    - The resolved value of the **Promise** is returned, allowing you to work with it like synchronous code.

    ## Why Use **async/await**?

    ### Readability:

    - By using **async/await**, you can avoid the complexity of chaining multiple **.then()** methods when dealing with Promises.
    - Your code looks more like traditional synchronous code, making it easier to read.

    ### Error Handling:

    - Error handling with **async/await** is simpler and more consistent with synchronous code.
    - You can use **try/catch** blocks to handle errors.
    `,
        query: transforms.queries,
        transforms: transforms.answers,
        priority: 0,
    });

| Name | Type | Required/Optional | Description |
|---|---|---|---|
| `title` | string | Optional | Corpus title. |
| `text` | plain text or Markdown-formatted strings | Required | Text corpus presented as plain text strings or Markdown-formatted strings. |
| `query` | function | Optional | Transforms function used to process user queries. For details, see Static corpus transforms. |
| `transforms` | function | Optional | Transforms function used to format the corpus output. For details, see Static corpus transforms. |
| `priority` | integer | Optional | Priority level assigned to the corpus. Corpuses with higher priority are considered more relevant when user requests are processed. For details, see Corpus priority. |
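
Because `text` accepts Markdown-formatted strings, content that already exists as structured data, such as FAQ entries, can be assembled into a single string before it is passed to `corpus()`. The following is a minimal sketch under that assumption: `faqToMarkdown` is a hypothetical helper, not part of the Alan AI API, and the sample questions are illustrative.

```javascript
// Hypothetical helper, not part of the Alan AI API: builds one
// Markdown-formatted string from question/answer pairs.
function faqToMarkdown(title, entries) {
    // Each entry becomes a second-level heading followed by its answer.
    const sections = entries.map(
        ({ question, answer }) => `## ${question}\n\n${answer}`
    );
    // A top-level heading, then all sections separated by blank lines.
    return [`# ${title}`, ...sections].join('\n\n');
}

const faqText = faqToMarkdown('HTTP FAQ', [
    {
        question: 'What is HTTP?',
        answer: 'HTTP is a protocol for fetching resources such as HTML documents.',
    },
    {
        question: 'Is HTTP stateless?',
        answer: 'Yes, but cookies allow the use of stateful sessions.',
    },
]);
console.log(faqText);

// In an Alan AI dialog script, the result could then be used as:
// corpus({ title: `HTTP FAQ`, text: faqText, priority: 0 });
```

Generating the string from data keeps the headings consistent across entries, but the structure that works best for retrieval is not specified in this section and may need experimentation.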